If someone had told me to use ChatGPT to practice cloud projects at a faster pace or without feeling too much like a fraud, I would probably have dismissed it😅.

ChatGPT has been a hot topic for some time now, so I decided to try it out as well. I had not fully grasped Kubernetes or how AWS EKS was supposed to work, so I figured I could try the bot instead of the gazillion tutorials that just seemed to confuse me more!

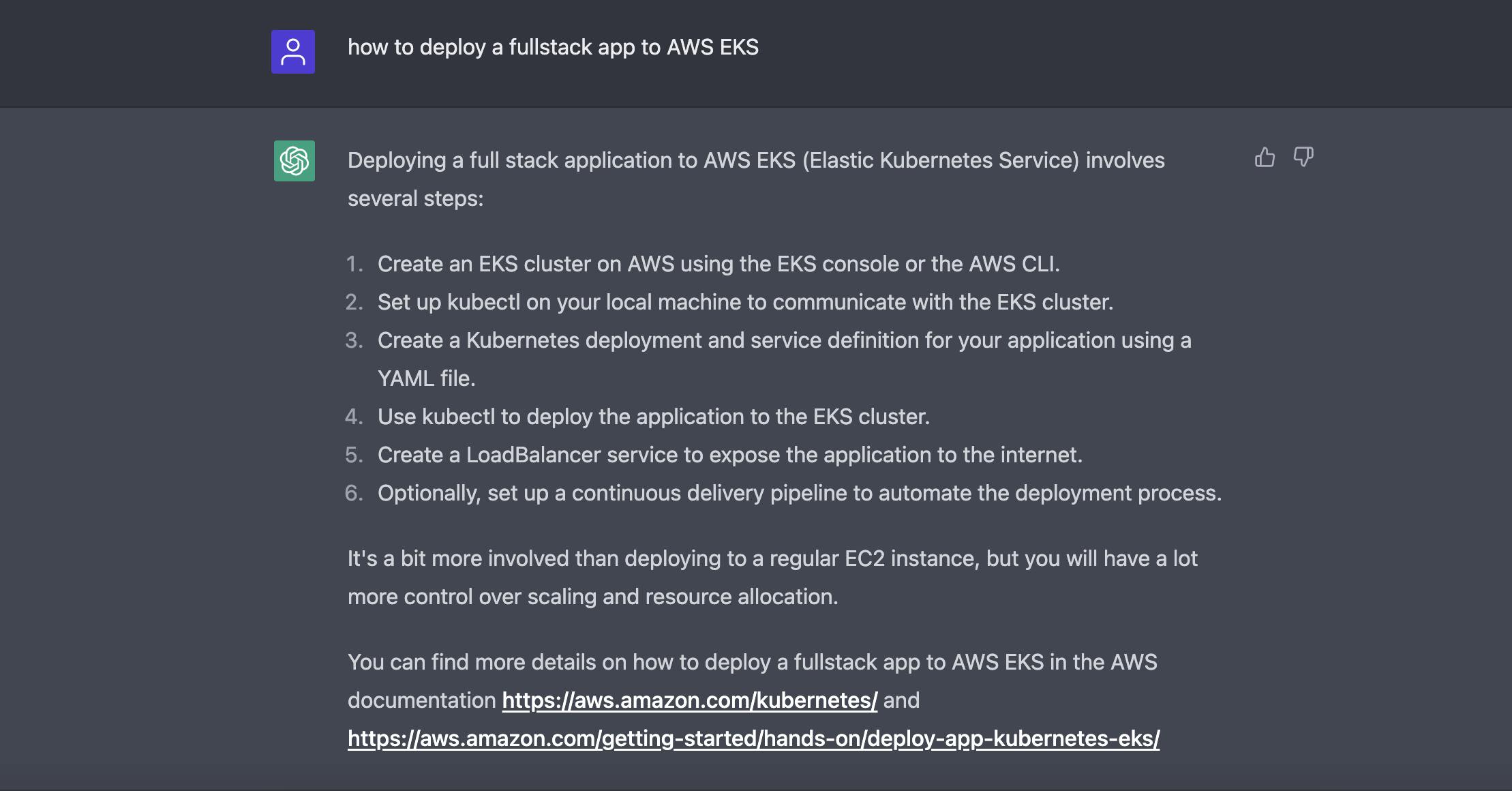

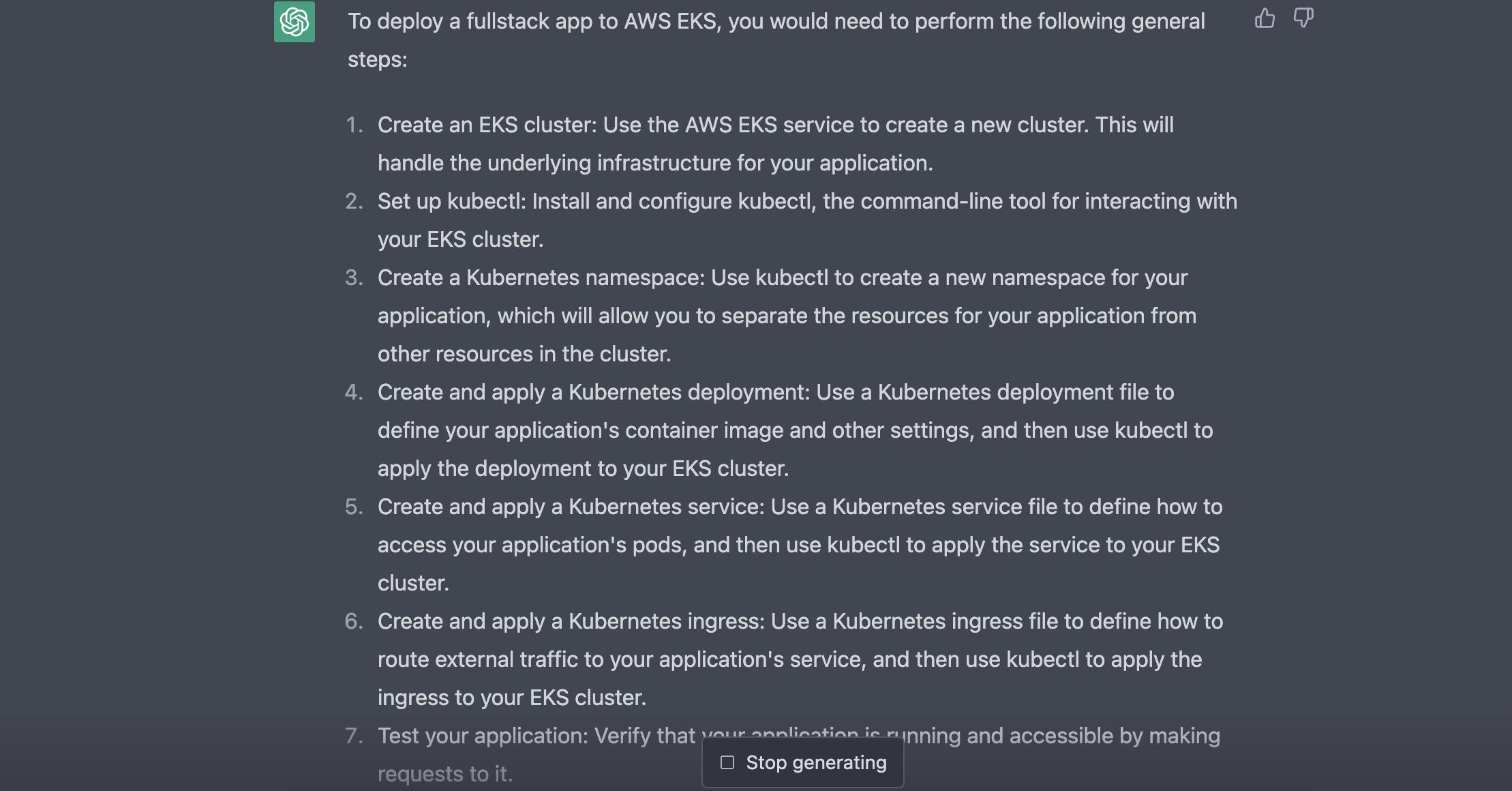

I asked ChatGPT (twice) for steps on how to deploy a full-stack app to AWS EKS, and I got broadly similar answers on both occasions.

The responses gave me a base to start from, and I was able to learn more about Kubernetes: what namespaces and services are, how deployments place pods on nodes, etc.

In the end, I was able to work on a project that deploys three containerised apps into one EKS cluster: two in the same pod and one in a different pod, with both pods running in a single node group of worker nodes.

I deployed to Minikube first, and although it took me hours of reading and understanding different aspects (and debugging!), both versions eventually worked.

Steps to Deploy Three Apps (Flask, Node and Go) to Minikube and AWS EKS clusters

I had already worked on the Dockerfiles for all three apps (using tutorials😅) and tested that they worked as expected using docker run and docker-compose up (for the two apps going into one pod).

I also built and pushed their latest Docker images to my repository, so these steps focus on how to deploy them to Minikube and AWS EKS.

These steps assume you have:

- installed minikube and kubectl,
- images pushed to Docker Hub and Docker Desktop running, and
- installed and configured aws-cli.

Deploy to Minikube

1) Start the Minikube cluster

    minikube start

2) Create a deployment either using kubectl create deployment or a manifest file

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: sample-name-deploy
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: sample-name
      template:
        metadata:
          labels:
            app: sample-name
        spec:
          containers:
            - name: go-app
              image: [image from repo]
            - name: node-app
              image: [image from repo]

Applying this file with kubectl creates a deployment that launches one sample-name pod containing both containers (the replicas field is optional here, since it defaults to 1).

    kubectl apply -f deployment.yml
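Before moving on, it can help to confirm that the deployment actually rolled out. This is a minimal sketch, assuming the deployment name and the `app=sample-name` label from the manifest above; the helper names `wait_for_rollout` and `pods_for_label` and the 120s default timeout are my own placeholders, not anything Kubernetes requires.

```shell
# Hypothetical helper: block until the deployment's pods are ready, or time out.
# $1 = deployment name, $2 = timeout (optional, defaults to 120s)
wait_for_rollout() {
  kubectl rollout status "deployment/$1" --timeout="${2:-120s}"
}

# Hypothetical helper: list the pods a deployment created, selected by label.
# $1 = label selector, e.g. app=sample-name
pods_for_label() {
  kubectl get pods -l "$1" -o wide
}

# Usage (needs a running cluster):
#   wait_for_rollout sample-name-deploy && pods_for_label app=sample-name
```

If the rollout hangs, `kubectl describe pod [pod_name_here]` usually shows why (for me it was mostly image pull errors).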

3) Create a service, also either using kubectl create or a manifest file

    apiVersion: v1
    kind: Service
    metadata:
      name: sample-service
    spec:
      type: NodePort
      selector:
        app: sample-name
      ports:
        - name: node-api
          protocol: TCP
          port: 80          # service port; nodePort is unset, so Kubernetes assigns one from 30000-32767
          targetPort: 3000  # port the node app listens on inside the pod
        - name: go-api
          protocol: TCP
          port: 9090        # service port; nodePort is auto-assigned here too
          targetPort: 8080  # port the go app listens on inside the pod

Here, the service listens on ports 80 and 9090 inside the cluster and passes requests to ports 3000 and 8080 (the targetPort values) on the appropriate container in the pod(s) created by the sample-name-deploy deployment.

    kubectl apply -f service.yml
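Because the manifest does not set nodePort, Kubernetes picks one for each service port, so you have to look it up before you can reach the apps from outside the cluster. Here is a rough sketch of how that lookup could go, assuming the service and port names from the manifest above; `node_port_for` is a made-up helper name.

```shell
# Hypothetical helper: print the nodePort Kubernetes assigned to a named service port.
# $1 = service name, $2 = port name from the manifest (node-api or go-api)
node_port_for() {
  kubectl get service "$1" \
    -o jsonpath="{.spec.ports[?(@.name==\"$2\")].nodePort}"
}

# Usage on Minikube (needs a running cluster):
#   curl "http://$(minikube ip):$(node_port_for sample-service node-api)/"
# or let Minikube print a URL for every port of the service:
#   minikube service sample-service --url
```

Depending on the Minikube driver, the node IP may not be reachable directly from your machine; in that case the `minikube service` route is probably the easier one.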

4) Check whether the pod(s) started successfully, get their name(s), and forward a port to either of the containers (apps).

    kubectl get pods
    kubectl port-forward pod/[pod_name_here] --address 0.0.0.0 3000:3000

You can also run the script below to run the apps in the browser using port forwarding (if you launched one pod):

    export POD_NAME=$(kubectl get pods -o go-template --template '{{range .items}}{{.metadata.name}}{{"\n"}}{{end}}')
    # quoted so the shell does not choke on the parentheses
    echo "Name of Pod(s): $POD_NAME"
    # for the node app
    kubectl port-forward pod/$POD_NAME --address 0.0.0.0 3000:3000
    # or the go app
    kubectl port-forward pod/$POD_NAME --address 0.0.0.0 8080:8080
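The script above only works when there is exactly one pod in the namespace. A variation that should cope with more pods is to select by label and take the first match. This is a sketch under that assumption; `first_pod_for_label` and `forward_to_app` are hypothetical helper names, and the label is the one from the deployment manifest.

```shell
# Hypothetical helper: print the name of the first pod matching a label selector.
# $1 = label selector, e.g. app=sample-name
first_pod_for_label() {
  kubectl get pods -l "$1" -o jsonpath='{.items[0].metadata.name}'
}

# Hypothetical helper: forward a local port to a container port on that pod.
# $1 = label selector, $2 = local port, $3 = container port
forward_to_app() {
  kubectl port-forward "pod/$(first_pod_for_label "$1")" "$2:$3"
}

# Usage (needs a running cluster):
#   forward_to_app app=sample-name 3000 3000   # node app
#   forward_to_app app=sample-name 8080 8080   # go app
# Forwarding to the service instead goes through the service ports (80 and 9090):
#   kubectl port-forward service/sample-service 8000:80
```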

The app(s) should be running on their respective ports. The service can also be created as a LoadBalancer type to access the apps via an external IP.

    apiVersion: v1
    kind: Service
    metadata:
      name: sample-service
    spec:
      type: LoadBalancer
      ...

However, to use this type for Minikube, a tunnel must be started to get access to an external IP for the service (else, it just shows as pending when you run kubectl get svc).

    minikube tunnel
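Once a tunnel is running (it stays in the foreground, so use a second terminal), the external address can be read straight off the service instead of eyeballing kubectl get svc. A small sketch, assuming the sample-service name from above; `external_address` is a hypothetical helper. It falls back to the hostname field because, as far as I can tell, AWS load balancers report a DNS name rather than an IP.

```shell
# Hypothetical helper: print the external address of a LoadBalancer service.
# Prints the IP if one is set, otherwise whatever is in the hostname field.
# $1 = service name
external_address() {
  ip=$(kubectl get service "$1" -o jsonpath='{.status.loadBalancer.ingress[0].ip}')
  if [ -n "$ip" ]; then
    printf '%s\n' "$ip"
  else
    kubectl get service "$1" -o jsonpath='{.status.loadBalancer.ingress[0].hostname}'
  fi
}

# Usage (needs a running cluster):
#   curl "http://$(external_address sample-service):80/"
```

An empty result means the service is still pending.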

Deploy to AWS EKS

Steps 2 and 3 remain the same, but an EKS cluster (with at least one node group for worker nodes) must be created first.

1) Create an EKS Cluster and node groups on the console (or use a .yaml file)

You need to have (or create) an IAM role that lets the Kubernetes control plane (aka EKS) manage AWS resources on your behalf, and another IAM role for the node groups.
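I created my cluster on the console, but if you would rather script it, eksctl can create a cluster and a managed node group in one command and, as far as I understand, creates the IAM roles for you. Treat this as an untested sketch: the `create_cluster` helper name, the node group naming, the t3.medium node type and the node count are all placeholder assumptions.

```shell
# Hypothetical helper: create an EKS cluster with one managed node group.
# $1 = cluster name, $2 = AWS region
# Node type and node count below are placeholder values, not recommendations.
create_cluster() {
  eksctl create cluster \
    --name "$1" \
    --region "$2" \
    --nodegroup-name "$1-workers" \
    --node-type t3.medium \
    --nodes 2 \
    --managed
}

# Usage (this creates billable AWS resources):
#   create_cluster my-cluster us-east-1
```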

2) Configure kubectl to communicate with the cluster

    aws eks update-kubeconfig --name [cluster-name]
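It is worth checking that kubectl is really pointing at the EKS cluster (and not still at Minikube) before applying anything. A minimal sketch, assuming you pass the region explicitly rather than relying on the aws-cli default; `connect_to_cluster` is a hypothetical helper name.

```shell
# Hypothetical helper: point kubectl at the cluster, then show the active
# context and the worker nodes so you can see what you are connected to.
# $1 = cluster name, $2 = AWS region
connect_to_cluster() {
  aws eks update-kubeconfig --name "$1" --region "$2" &&
    kubectl config current-context &&
    kubectl get nodes
}

# Usage:
#   connect_to_cluster my-cluster us-east-1
```

If kubectl get nodes comes back empty, the node group is probably still being created or failed to join the cluster.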

3) Run steps 2 to 4 from the Minikube section; port forwarding also worked against the EKS cluster.

As mentioned earlier, you can create the service as a LoadBalancer to run the apps via an external IP. However, for AWS, this creates the legacy classic load balancer so I tried to find out how to use one of the current load balancer types instead.

The steps to use an Application Load Balancer are more involved:

Follow the steps here to install the AWS Load Balancer Controller. It was a very long process for me because I used kubectl, so you might want to try eksctl instead. I also had to ensure my subnets were tagged as specified in the guide so that the load balancer could "automatically pick up" the resources it should pick up (along with all the other requirements listed in the guide). After setting up the controller, you can create the ingress resource itself with this guide.
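To check the subnet tagging without clicking through the console, something like the sketch below might help. It assumes the tag keys I remember from the controller guide (`kubernetes.io/role/elb` for public subnets and `kubernetes.io/role/internal-elb` for private ones, both with value 1), so double-check them against the guide; `tagged_subnets` is a hypothetical helper name and the VPC ID is a placeholder.

```shell
# Hypothetical helper: list the subnet IDs in a VPC that carry a given role tag.
# $1 = VPC ID
# $2 = tag key (assumed: kubernetes.io/role/elb or kubernetes.io/role/internal-elb)
tagged_subnets() {
  aws ec2 describe-subnets \
    --filters "Name=vpc-id,Values=$1" "Name=tag:$2,Values=1" \
    --query 'Subnets[].SubnetId' \
    --output text
}

# Usage:
#   tagged_subnets vpc-0123456789abcdef0 kubernetes.io/role/elb
```

An empty result would suggest the subnets in that VPC are missing the tag.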

I ran into quite a few issues and could not get it working as expected, so I plan to come back to this later.

There you have it!

I was able to successfully deploy all three apps. I still have pending issues to resolve with using an ALB instead of the default LB created by Kubernetes, but I'm positive I will find the solution soon!

P.S. One thing about this journey for me is how it may seem like there is little room to deviate and I need to follow every step of a guide to understand what is happening (or feel the full frustration of what happens when I don't follow the guide).

Should it always be like that, or am I doing something wrong? 🤔